3.1 General description

In this task, the robots are tested and evaluated on how well they recognize new potential obstacles. A set of images will be placed in front of the robot, one after another, and each image belongs to one of three groups: a deer, a human, or something else. Each robot must have an acoustic and/or visual indicator that lets the jury and audience know what the robot sees: a human, a deer, or unknown.

To make the competition as fair as possible, each team will provide 3 images before the start of the tasks. One of each team's 3 images will then be selected at random for the final set (so if 15 teams compete, the set will consist of 15 images). Each image will be printed on its own white A3 sheet of paper, and the sheets will be placed in random order in front of the robot at a distance of 1.5 m. The robot then has 5 seconds to detect the image, recognize it, and indicate what it sees.

For this task, the robot is placed at the beginning of the field between the two plant rows and does not move or drive. Instead, it focuses on what is placed in front of it and classifies it. Only one classification per obstacle can be made, and it cannot be changed (only the first counts).

3.2 Rules for robots

Each robot must start detecting within 1 minute of the start indication (an acoustic signal). The maximum time available for sensing is 5 seconds per obstacle. Between obstacles, the jury has a window of up to 10 seconds to change the pictures (first removing the previous picture, then placing the new one). Once a detection is made, it cannot be changed during the next 5 seconds. Only the first detection counts.
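The "first detection counts" and 5-second sensing rules can be sketched as a small per-obstacle latch that accepts only the first classification made within the window. This is a minimal, unofficial Python sketch: the names `Label`, `FirstDetectionLatch`, `start` and `report` are hypothetical, and it assumes the robot's software supplies its own timestamps in seconds and fires its acoustic/visual indicator whenever `report` returns `True`.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Label(Enum):
    """The three classes named in the task description."""
    HUMAN = "human"
    DEER = "deer"
    UNKNOWN = "unknown"


# Sensing window per obstacle, taken from the rules (5 seconds).
SENSING_WINDOW_S = 5.0


@dataclass
class FirstDetectionLatch:
    """Hypothetical helper: keeps only the first classification
    reported within the sensing window for the current obstacle."""
    window_s: float = SENSING_WINDOW_S
    start_s: Optional[float] = None
    label: Optional[Label] = None

    def start(self, now_s: float) -> None:
        """Begin a new obstacle: clear the previous label and start the timer."""
        self.start_s = now_s
        self.label = None

    def report(self, label: Label, now_s: float) -> bool:
        """Try to report a label; return True only if it is accepted.

        A label is accepted if an obstacle is active, no label has been
        accepted for it yet, and the sensing window has not expired."""
        if self.start_s is None or self.label is not None:
            return False
        if now_s - self.start_s > self.window_s:
            return False
        self.label = label
        return True
```

For example, after `latch.start(0.0)`, a call to `latch.report(Label.DEER, 1.2)` is accepted, while a later `latch.report(Label.HUMAN, 2.0)` is rejected because the first detection has already been latched. Keeping the latch separate from the classifier means the classifier can keep running without any risk of overriding the first answer.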

3.3 Points distribution

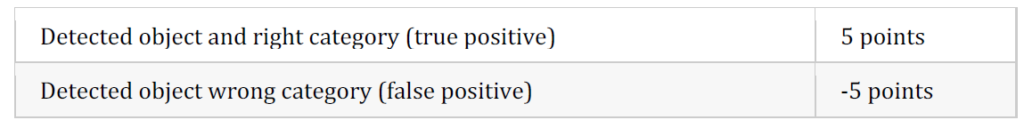

The jury assesses the detection and classification during the run.

Example images for task 3: https://fieldrobot.nl/event/index.php/2023/05/05/task-3-example-images/