1.1 General description

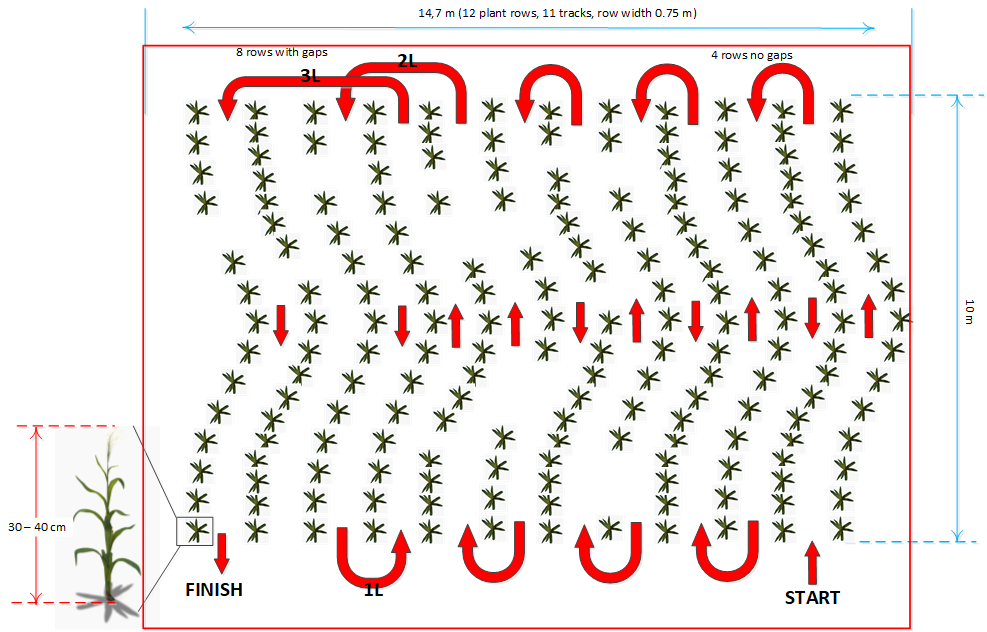

For this task, the robots navigate autonomously through a real maize field. For tracks 1 to 5, the robot must turn into the adjacent row at each row end. After exiting track 5, the robot must follow a given turning pattern. The task assesses the accuracy, smoothness, and speed of navigation between the rows. The robot has three minutes to navigate between the rows, and the aim is to cover as much distance as possible. An example field and driving pattern are shown in Figure 1.1.

Tracks 1 to 3 have no intra-row gaps, which makes it easy for robots to start. In the rest of the field (tracks 4 to 11) there are intra-row gaps, sometimes on both sides of the track. In the last part of the run, after track 5, the robot has to follow a given turning and row pattern. For example, the pattern might be: S – 1L – 1R – 3L – 2L – 2R – F.
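
A robot's control software has to turn this pattern notation into a sequence of manoeuvres. The sketch below is a minimal, hypothetical parser, assuming that "S" and "F" mark the start and finish, that a token such as "3L" means the third row to the left (following the reading "2L (second left)" used in the Figure 1.1 caption), and that tokens are separated by an en dash or a plain hyphen. The function name `parse_pattern` is illustrative only.

```python
import re
from typing import List, Tuple

# Assumed token format: a positive row count followed by L (left) or R (right).
TURN_TOKEN = re.compile(r"^(\d+)([LR])$")


def parse_pattern(pattern: str) -> List[Tuple[int, str]]:
    """Convert a pattern such as 'S – 1L – 1R – F' into (rows, direction) turns.

    Assumes 'S' (start) and 'F' (finish) bracket the turns and that tokens
    are separated by an en dash or a hyphen.
    """
    # Split on either separator and drop surrounding whitespace.
    tokens = [t.strip() for t in re.split(r"[–-]", pattern) if t.strip()]
    if not tokens or tokens[0] != "S" or tokens[-1] != "F":
        raise ValueError("pattern must start with 'S' and end with 'F'")

    turns = []
    for token in tokens[1:-1]:
        match = TURN_TOKEN.match(token)
        if match is None:
            raise ValueError(f"unrecognised turn token: {token!r}")
        rows, direction = int(match.group(1)), match.group(2)
        if rows < 1:
            raise ValueError("row count must be at least 1")
        turns.append((rows, direction))
    return turns


print(parse_pattern("S – 1L – 1R – 3L – 2L – 2R – F"))
# [(1, 'L'), (1, 'R'), (3, 'L'), (2, 'L'), (2, 'R')]
```

Keeping the parsed turns as plain (count, direction) pairs leaves the actual headland manoeuvre (how to count rows while driving along the headland) to the robot's own navigation logic.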

Stones and pebbles are placed randomly along the path, so the robot needs sufficient ground clearance. To make detection easier for sensors, there are no gaps at the row entries and exits. The row ends and beginnings are not necessarily aligned. The headland may be bounded by a fence, a ditch, or something similar.

1.2 Rules for robots

Each robot must start within 1 min of the start signal (an acoustic signal). The maximum available time for the run is 3 min.

1.3 Points distribution

The distance travelled along the given path during the task is measured. (As soon as the robot leaves the specified path, distance measurement stops.) If the robot reaches the end of the field in less than 3 min, a bonus factor is applied. The final distance, including the bonus factor, is calculated as:

$$S_{\text{final}}\ [\text{m}] = S_{\text{corrected}}\ [\text{m}] \times \frac{3\ [\text{min}]}{t_{\text{measured}}\ [\text{min}]}$$
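
The scoring formula scales the corrected distance by the ratio of the 3 min limit to the measured time, so a run that uses the full 3 min gets a factor of exactly 1 and a faster finish gets a factor above 1. The sketch below is a minimal illustration, assuming the measured time is given in minutes and never exceeds the 3 min limit; the function name `final_distance` is hypothetical.

```python
def final_distance(corrected_m: float, measured_min: float, max_min: float = 3.0) -> float:
    """Final distance in meters: corrected distance times (3 min / measured time).

    Assumes measured_min is the time actually used, at most the 3 min limit,
    so the bonus factor max_min / measured_min is always >= 1.
    """
    if measured_min <= 0:
        raise ValueError("measured time must be positive")
    if measured_min > max_min:
        raise ValueError("measured time cannot exceed the run limit")
    return corrected_m * max_min / measured_min


print(final_distance(100.0, 3.0))  # 100.0 (full time used, no bonus)
print(final_distance(100.0, 2.5))  # 120.0 (field end reached after 2.5 min)
```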

The corrected distance is the travelled distance less the penalty values. The travelled distance, penalty values, and performance time are measured by the jury officials.

Each crop plant damaged by the robot results in a penalty of 2% of the total row length, in meters. (Example: 10 rows × 10 m = 100 m maximum distance, which means a penalty of 2 m per damaged plant.)
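
The penalty rule can be expressed as a short calculation. The sketch below assumes that the corrected distance is the travelled distance minus the total plant-damage penalty; the function names `plant_damage_penalty` and `corrected_distance` are hypothetical, and the usage reproduces the 10 rows × 10 m example above.

```python
def plant_damage_penalty(total_row_length_m: float, damaged_plants: int) -> float:
    """Penalty in meters: 2% of the total row length for each damaged plant."""
    if damaged_plants < 0:
        raise ValueError("damaged plant count cannot be negative")
    return 0.02 * total_row_length_m * damaged_plants


def corrected_distance(travelled_m: float, penalty_m: float) -> float:
    """Assumed: corrected distance = travelled distance minus total penalty."""
    return travelled_m - penalty_m


total_row_length = 10 * 10  # 10 rows x 10 m = 100 m
penalty = plant_damage_penalty(total_row_length, 3)
print(penalty)  # 6.0 (3 damaged plants x 2 m)
print(corrected_distance(80.0, penalty))  # 74.0
```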

Figure 1.1: Concept of the field structure for the navigation task (example). Tracks 1 to 3 have no gaps; tracks 4 to 11 have gaps. After track 7, navigation follows the pattern 2L (second left), 1L (first left), and 3L (third left), as an example. The headlands are 2 m wide.