General description

The robots shall detect objects, namely weeds and beer cans (as an example of waste), and map or geo-reference them. Coordinates are given in a local coordinate system in the horizontal plane of the field. Its reference point is marked by pillars carrying a QR code for camera detection and a pole for detection by, e.g., LIDAR. Task 3 is conducted in an environment similar to that of Task 2; nevertheless, good row navigation is required. There are nine (9) objects in total, distributed across the virtual field.
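
The rules leave open how a detection made by the robot is expressed relative to the reference point. A minimal sketch, assuming the robot already knows its own pose (x, y, heading) in a field frame whose origin is the reference point (for example from localizing against the QR pillars or the pole), and that each detection is given in the robot frame with x pointing forward and y to the left. The function name `to_field_frame` and these frame conventions are hypothetical.

```python
import math
from typing import Tuple


def to_field_frame(
    robot_x: float, robot_y: float, robot_heading: float,
    det_x: float, det_y: float,
) -> Tuple[float, float]:
    """Transform a detection from the robot frame into the field frame.

    Hypothetical conventions: the field frame has its origin at the reference
    point; robot_heading is in radians, counter-clockwise from the field x-axis;
    det_x points forward and det_y to the left of the robot (all in meters).
    """
    cos_h = math.cos(robot_heading)
    sin_h = math.sin(robot_heading)
    # 2-D rigid transform: rotate by the robot heading, then add the robot position.
    field_x = robot_x + cos_h * det_x - sin_h * det_y
    field_y = robot_y + sin_h * det_x + cos_h * det_y
    return field_x, field_y


# Robot at (1.0, 2.0) m facing the field +y direction sees an object 1.0 m ahead,
# so the object lies at about (1.0, 3.0) m relative to the reference point.
print(to_field_frame(1.0, 2.0, math.pi / 2, 1.0, 0.0))
```

The accuracy of the resulting map depends directly on how well the robot pose relative to the reference point is known, which is why the marked reference point matters.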

The robot has to generate a file (*.csv) listing the detected, classified objects and their coordinates relative to the given reference point. Each object is reported on one line of the file, giving its x and y coordinates in the horizontal plane in meters with three decimal places, and its kind (see Table 5). Extra points can be obtained for object classification (weed or waste).
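
A file in this format can be produced with Python's standard `csv` module. A minimal sketch, assuming comma separators, a header row copied from the columns of Table 5, and the two kinds `weed` and `waste`; the function name `write_map_file` is hypothetical and not part of any official tooling.

```python
import csv
import io
from typing import Iterable, TextIO, Tuple

# (x in m, y in m, kind), relative to the reference point
Detection = Tuple[float, float, str]
VALID_KINDS = {"weed", "waste"}


def write_map_file(detections: Iterable[Detection], out: TextIO) -> None:
    """Write one line per object with x and y formatted to three decimal places."""
    writer = csv.writer(out, lineterminator="\n")
    # Header row mirrors the columns of Table 5 (assumption: a header is accepted).
    writer.writerow(["X", "Y", "Kind"])
    for x, y, kind in detections:
        if kind not in VALID_KINDS:
            raise ValueError(f"unknown object kind: {kind!r}")
        writer.writerow([f"{x:.3f}", f"{y:.3f}", kind])


buffer = io.StringIO()
write_map_file([(2.6451, 3.5829, "weed"), (4.8944, 3.5617, "waste")], buffer)
print(buffer.getvalue())
```

To write to disk, open the file with `newline=""` as the `csv` documentation recommends, e.g. `with open("map.csv", "w", newline="") as f: write_map_file(detections, f)`, where `map.csv` and `detections` are placeholder names.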

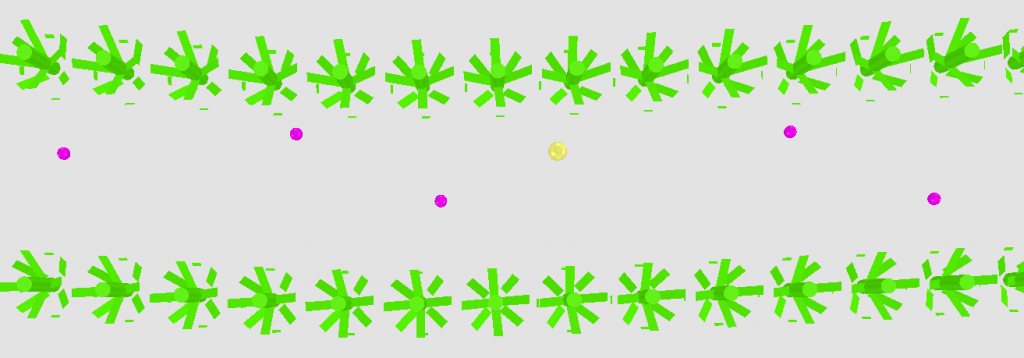

Virtual Field Environment

Objects are realistic weeds and cans, e.g. beer cans of different brands and colours. The objects are placed randomly across the field within the rows, NOT between the rows; within a row, they may lie between the crop plants or in line with them. No objects are located on the headlands.

Rules for robots

Each robot has only one attempt. To start, the robot is placed at the beginning of the first row without crossing the white line. The maximum time available for the run is 3 min.

Assessment

The jury assesses the detection during the run:

| Detection during the run | Points |
|---|---|
| Detected object (true positive) | 5 |
| Detected object (false positive) | -5 |
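
The detection scoring reduces to a linear sum over the jury's counts. A minimal sketch, assuming the numbers of true and false positives are the only inputs; the function name `detection_points` is hypothetical. With nine objects in the field, the maximum detection score would be 9 × 5 = 45 points, assuming each object can be counted as a true positive only once.

```python
TRUE_POSITIVE_POINTS = 5    # per correctly detected object during the run
FALSE_POSITIVE_POINTS = -5  # per false detection during the run


def detection_points(true_positives: int, false_positives: int) -> int:
    """Return the detection score for one run from the jury's counts."""
    if true_positives < 0 or false_positives < 0:
        raise ValueError("counts must be non-negative")
    return (TRUE_POSITIVE_POINTS * true_positives
            + FALSE_POSITIVE_POINTS * false_positives)


print(detection_points(8, 2))  # 8 * 5 - 2 * 5 = 30
```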

The jury also assesses the classification and the accuracy of the mapped objects:

| x: Euclidean distance to the object of the same kind* | Points |
|---|---|
| x ≤ 2 cm | 15 |
| 2 cm < x ≤ 37.5 cm | 15.56 - 0.2817 · x (x in cm) |
| x > 37.5 cm (false positive) | -5 |

*(distance error to the nearest object of the same kind)
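
The accuracy table is a piecewise-linear function of x in centimetres: 15 points up to 2 cm, falling linearly to about 5 points at 37.5 cm (15.56 - 0.2817 · 37.5 ≈ 5.0), and -5 points beyond that. A sketch of how a team might estimate its own mapping score against a known ground truth, assuming coordinates in meters as in the map file and that each reported entry is compared with the nearest ground-truth object of the same kind, as the footnote states. Whether one ground-truth object may be matched by several entries, and how an entry whose kind does not occur in the field is scored, are not specified; here repeat matches are allowed and such an entry is scored as a false positive (both assumptions). The function names are hypothetical, and the classification bonus is not modelled.

```python
import math
from typing import List, Tuple

MapEntry = Tuple[float, float, str]  # (x in m, y in m, kind)


def accuracy_points(distance_cm: float) -> float:
    """Points for one mapped object given its distance error in cm."""
    if distance_cm <= 2.0:
        return 15.0
    if distance_cm <= 37.5:
        return 15.56 - 0.2817 * distance_cm
    return -5.0  # beyond 37.5 cm the entry counts as a false positive


def mapping_score(reported: List[MapEntry], truth: List[MapEntry]) -> float:
    """Sum accuracy points, matching each entry to the nearest object of its kind."""
    total = 0.0
    for x, y, kind in reported:
        same_kind = [(tx, ty) for tx, ty, tkind in truth if tkind == kind]
        if not same_kind:
            # Assumption: no object of this kind exists, so score as a false positive.
            total += -5.0
            continue
        nearest_m = min(math.hypot(x - tx, y - ty) for tx, ty in same_kind)
        total += accuracy_points(100.0 * nearest_m)  # meters -> centimetres
    return total


truth = [(2.645, 3.583, "weed"), (4.894, 3.562, "waste")]
reported = [(2.655, 3.583, "weed"), (4.994, 3.562, "waste")]
print(round(mapping_score(reported, truth), 3))  # 15 + (15.56 - 2.817) = 27.743
```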

Crop plant damage by the robot will result in a penalty of 4 points per plant.

The teams completing the task will be ranked by the number of points described above. The three best teams will be rewarded.

| X | Y | Kind |
|---|---|---|
| 2.645 | 3.583 | weed |
| 3.804 | 3.537 | weed |
| 4.894 | 3.562 | waste |

Table 5 – Example of a map file recorded in Task 3
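
A team may want to check its output against the layout of Table 5 before the run ends. A minimal sketch, assuming comma-separated values, an optional header row matching Table 5 and the two kinds named in this task; the function name `read_map_file` is hypothetical, and the jury's own evaluation may be stricter or more lenient.

```python
import csv
import io
import re
from typing import List, Tuple

COORD = re.compile(r"-?\d+\.\d{3}")  # meters with exactly three decimal places
KINDS = {"weed", "waste"}


def read_map_file(text: str) -> List[Tuple[float, float, str]]:
    """Parse a map file laid out like Table 5 and check each entry."""
    rows = list(csv.reader(io.StringIO(text)))
    if rows and [c.strip().lower() for c in rows[0]] == ["x", "y", "kind"]:
        rows = rows[1:]  # skip an optional header row
    entries = []
    for entry_no, row in enumerate(rows, start=1):
        if not row:
            continue  # ignore blank lines
        if len(row) != 3:
            raise ValueError(f"entry {entry_no}: expected 3 fields, got {len(row)}")
        x, y, kind = (field.strip() for field in row)
        if not (COORD.fullmatch(x) and COORD.fullmatch(y)):
            raise ValueError(f"entry {entry_no}: coordinates need three decimals")
        if kind not in KINDS:
            raise ValueError(f"entry {entry_no}: unknown kind {kind!r}")
        entries.append((float(x), float(y), kind))
    return entries


example = "X,Y,Kind\n2.645,3.583,weed\n3.804,3.537,weed\n4.894,3.562,waste\n"
print(read_map_file(example))
```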